The Quiet Displacement

What Anthropic’s report on AI and jobs tells us, and what it may be steering us toward instead

Most discussions about AI and work still revolve around one big question: will AI replace jobs?

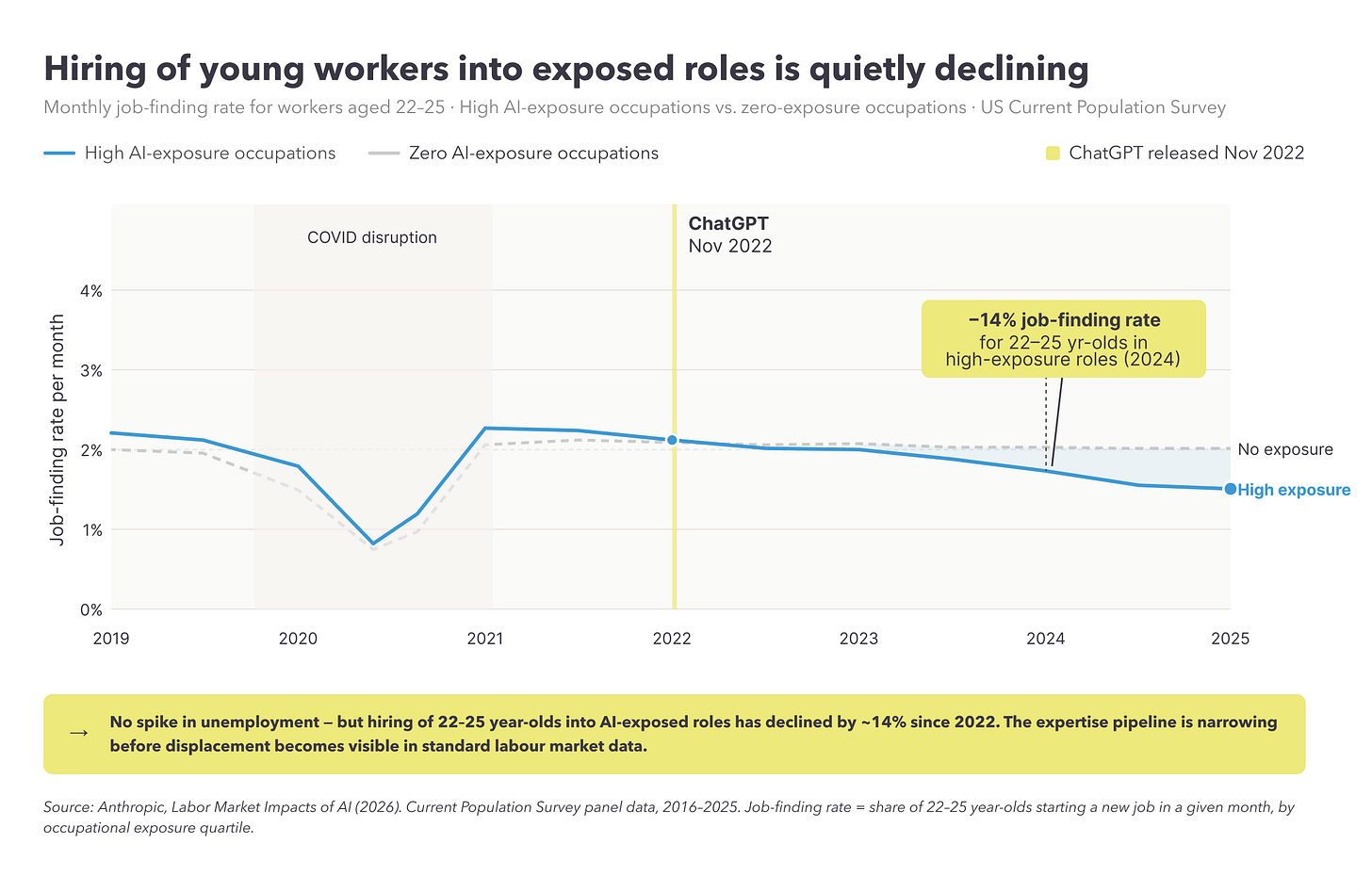

Anthropic’s recent report, Labor Market Impacts of AI: A New Measure and Early Evidence, gives a fairly calm answer. So far, it finds no measurable rise in unemployment in the occupations most exposed to AI since the release of ChatGPT in late 2022. That is useful. It pushes back against some of the more dramatic claims, and it is also a reminder that real change in organisations rarely shows up first in the headline numbers.

But what interested me more in the report was something else. It starts with the question of job displacement, but gradually shifts the reader toward another question entirely: not whether AI is replacing work, but whether organisations are moving too slowly to make full use of it.

That may be right. But it is also where the report becomes less neutral.

What the report gets right

There is quite a lot to like in it.

1. It is right to say that unemployment is a poor early signal for this kind of change. Organisations rarely move from stable roles to layoffs in one step. They first slow hiring, change the mix of tasks, leave certain vacancies unfilled and quietly narrow entry paths. By the time unemployment becomes visible, much of the real adjustment has already happened.

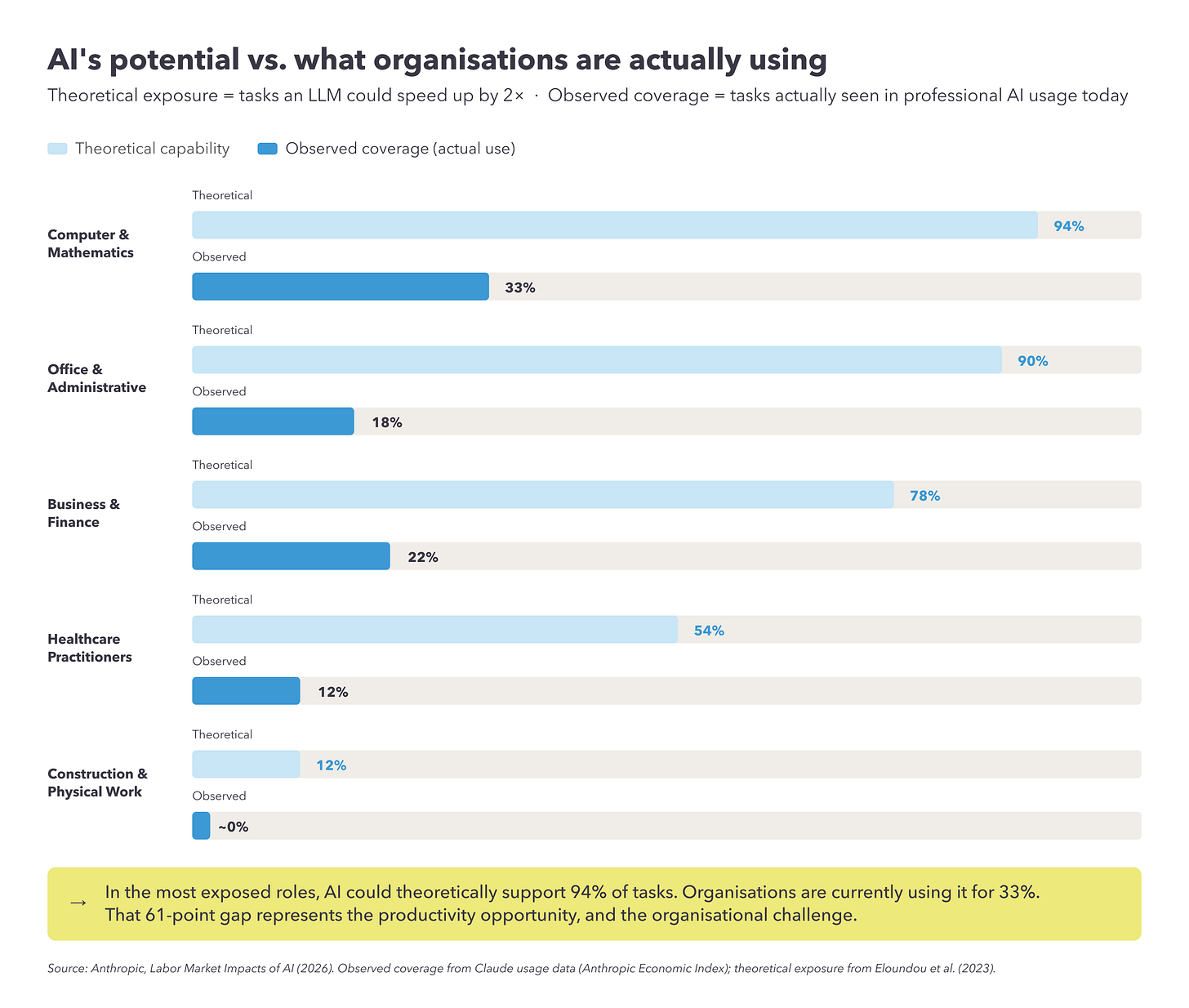

2. The distinction between what AI can theoretically do and what it is actually being used for is very helpful. The researchers capture it with a measure they call observed exposure, which combines theoretical LLM capability with real-world usage data drawn from Anthropic’s platform. Too much of the AI debate jumps straight from capability to impact, as if the existence of a tool automatically meant organisational change. It does not. There is always a long distance between something being possible and it becoming part of how work is actually done.

3. The point about younger workers should not be overlooked. If junior roles are starting to weaken in the more exposed occupations, then this matters far beyond hiring statistics. Many organisations still build expertise in the old way: juniors do the groundwork, learn by doing, and over time develop the judgment needed for more senior work. If AI starts absorbing that early layer of work, then the question is not just whether productivity goes up. The question is how expertise will be built from now on. That is a serious issue, and one many companies are not discussing clearly enough.

Where I become more cautious

The report’s most interesting idea is the gap between theoretical exposure and observed usage. In simple terms, AI could do much more than organisations are currently asking it to do. Anthropic then points to this as a sign that the bigger issue may not be displacement, but under-adoption. And this is where I think a bit more caution is needed.

A gap between capability and usage does not only mean that organisations are behind. It can also mean the technology is still not reliable enough in many settings. Or that the cost of checking and verifying output remains high. Or that governance, legal constraints, and workflow integration are more complicated than the capability story suggests. Or simply that some things are technically possible, but not yet worth doing in practice.

That matters because, without these alternative explanations in view, the argument starts to tilt. The report begins as a study of labour market effects, but it slowly turns into something closer to an adoption argument: the technology is ready, and organisations need to move faster. Maybe. But that is not the only conclusion one could draw from the same data.

The question changes halfway through. That, to me, is the central issue.

The report starts with one question: is AI reducing employment? But much of its energy goes into answering another: why are organisations not using more AI already? Those are both important questions, but they are not the same question.

And when they get blended together, it becomes easier to move from evidence to recommendation without really stopping in between. This is also where the fact that the report comes from Anthropic matters. I say that without cynicism. I think Anthropic has taken the right position on many questions of privacy, safety, and responsible deployment. But it is still an AI company.

It is not surprising that it would look at the economy and see a large gap between what AI could do and what organisations are currently doing with it. That is, in a way, the commercial story as well as the analytical one. That does not make the report wrong. It just means the framing should not be taken at face value.

What leaders should actually take from it

For me, the real lesson is not simply that organisations need to move faster. It is that they need to think more clearly.

Yes, there is probably a lot of underused potential. Yes, many firms are still stuck at the stage of tools, pilots, and scattered experiments. But speed on its own is not a strategy. Leaders still have to answer some hard questions. Where does AI genuinely improve performance? Where does it create more checking work than value? Which tasks can be automated without weakening the development of people? Which workflows should be redesigned, and which should not? These are management questions, not technology questions.

The issue with junior talent makes this especially important. If firms use AI to reduce the need for entry-level work, that may look efficient in the short term. But organisations do not run on output alone. They run on judgment, and judgment has to come from somewhere. If the old path to building expertise starts to disappear, companies need a new one. Otherwise they may find, a few years from now, that they have improved efficiency while weakening capability.

That is not a minor side effect. It is the kind of thing that shows up late and is hard to reverse.

A more balanced reading

So I think Anthropic’s report is worth reading, and worth taking seriously. It is more thoughtful than much of what gets written on AI and jobs. It usefully reminds us that labour market change can be quiet before it becomes obvious. It makes a good distinction between technical possibility and actual use. And it raises a real concern about younger workers entering exposed fields. But I would be slower to accept its implied conclusion.

The gap between what AI can do and what organisations are doing with it is not automatically a sign of failure or delay. It may also reflect sensible caution, unresolved limitations, or the simple fact that work is harder to redesign than slide decks make it seem.

The question is not whether organisations should use more AI. The question is where AI creates real value, where it introduces risk or fragility, and where moving too fast may solve the wrong problem. That is the harder conversation, and it is also the more useful one.

Source: Massenkoff, M. and McCrory, P. (2026). Labor Market Impacts of AI: A New Measure and Early Evidence. Anthropic.