Ethical AI as Competitive Advantage: Why Doing the Right Thing Pays Off

TL;DR:

Responsible AI is no longer only a moral choice; it’s a strategic advantage.

Companies that embed ethics into design, governance, and deployment outperform those that treat it purely as risk management.

Recent reports from the World Economic Forum and Harvard Business Review, along with an EY survey covered by Reuters, point to a clear link between trust, financial resilience, and innovation outcomes.

The Business Case for Ethical AI

For years, responsible AI was something companies handed off to their compliance teams and risk committees, the people worrying about what could go wrong rather than what could go right. But that’s changing fast.

In its 2025 report “Advancing Responsible AI Innovation: A Playbook”, the World Economic Forum makes a clear argument: trust is no longer just a reputational benefit but a business driver. When organizations build fairness, transparency, and accountability into their AI systems from day one, they create the kind of stakeholder confidence that accelerates adoption, collaboration, and growth.

Ethical AI creates value before it prevents harm.

This is especially relevant as AI systems become more embedded in products, services, and decision-making. Whether it’s a recruitment model screening applicants or an algorithm optimizing supply chains, each interaction is a test of credibility.

When customers, employees, and regulators trust how your systems reason, they trust your brand.

And trust compounds in interesting ways. According to the WEF Playbook, it reduces friction in sales conversations, lowers the cost of regulatory oversight, and helps you attract the kind of talent that actually wants to build things responsibly. That’s not soft value; it’s operational advantage.

What Ignoring Ethical AI Actually Costs

The opposite is equally true, and the numbers here are sobering. A 2025 Harvard Business Review study called “6 Ways AI Changed Business in 2024, According to Executives” found something revealing: leaders who rushed into AI without proper governance are hitting the brakes now. More than 70% of surveyed executives admitted they’ve had to pause or completely roll back at least one AI initiative because of ethical, legal, or reputational concerns. Several said they dramatically underestimated what it would cost them to fix bias issues and retrain models after facing public criticism or regulatory pressure.

The executives’ lesson was clear and painful: fixing broken trust costs far more than building it right from the start. Skipping responsible AI doesn’t just create moral or legal headaches; it creates operational drag that slows everything down. You end up with reactive governance, fragmented workflows, and a brand that’s vulnerable to the next controversy. Companies that skipped the early governance work are now spending months rebuilding systems that could have been ethical by design.

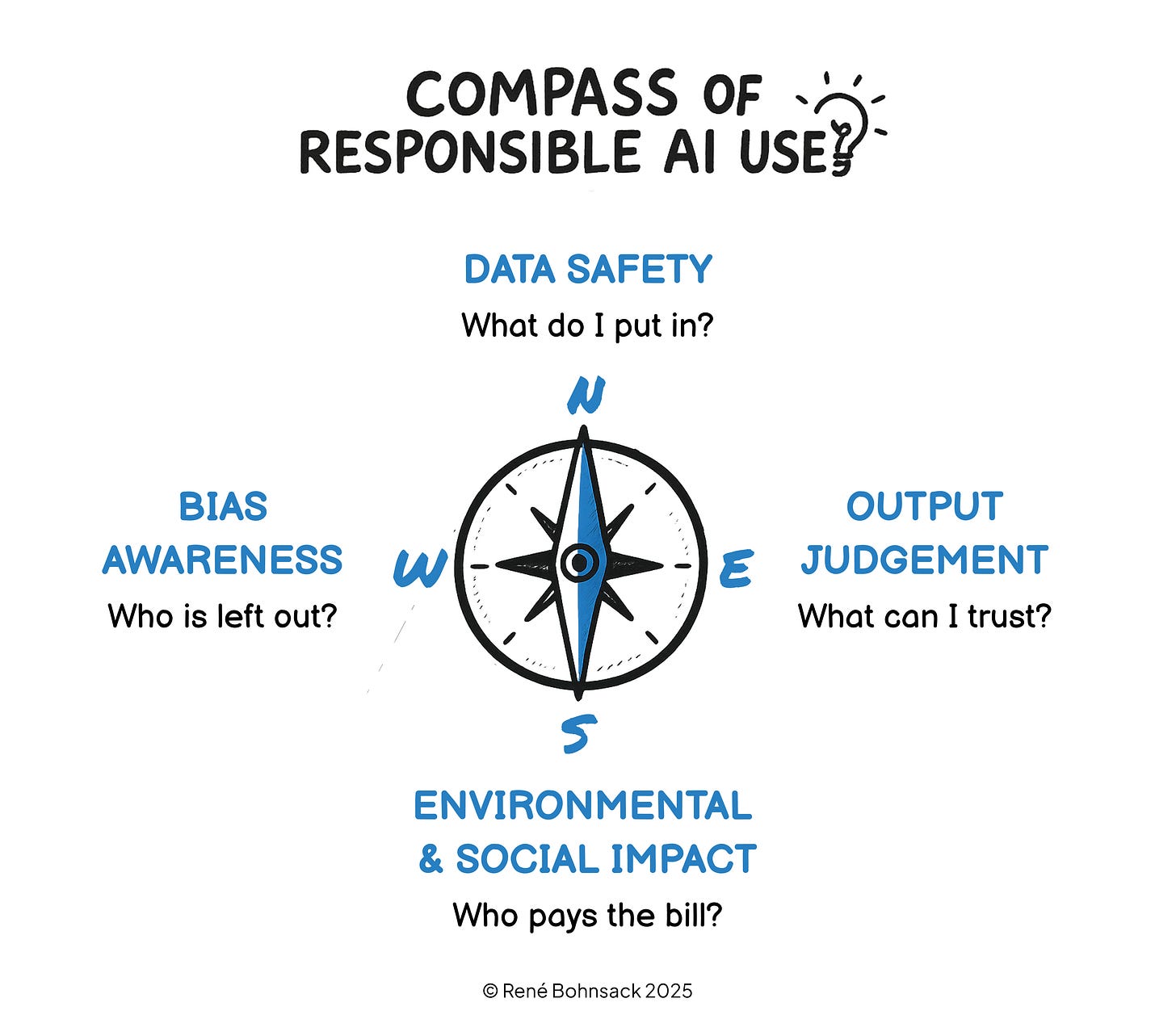

Navigating Responsible AI: The Compass

So how do you actually do responsible AI in practice? It’s not just about having a checklist; it’s more like having a compass. It helps you navigate the inevitable trade-offs between moving fast, building trust, and making real impact by asking the right questions before every deployment.

Think of the AI Compass as a way to translate abstract ethical principles into daily habits. It works for governance at the executive level and for quick judgment calls on the front lines. Executives can use it to evaluate strategic opportunities and risks, while employees can use it as a gut check before they hit Enter on that prompt.

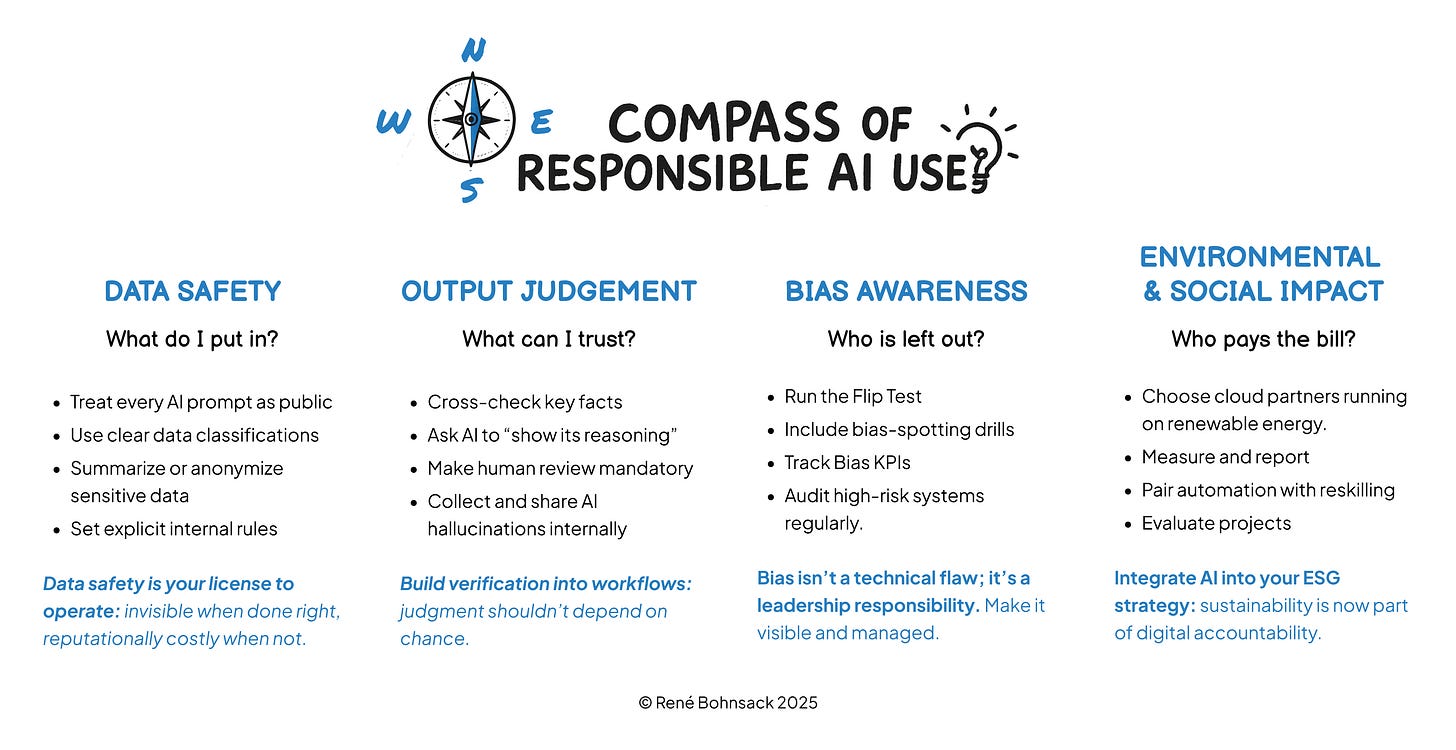

North - Data Safety: What do I put in?

Every AI interaction starts with data, and that data is never neutral. What you feed into the system determines not just the quality of what comes out, but whether your organization stays compliant and credible in the first place.

A compliance officer at a European bank shared a story that perfectly illustrates this. One of their analysts, eager to impress, used ChatGPT to clean up a financial report by pasting raw transaction data into the model. The output looked polished and professional. The problem? It was also a serious data breach.

Here are some practical habits for the North direction:

Start by treating every AI prompt like it’s public information. If you wouldn’t email something to a stranger, don’t paste it into a public model.

Use your organization’s data classification system (public, internal, confidential, regulated) to guide what’s safe to share.

When you need to work with sensitive material, summarize it or anonymize it first.

Make sure you have clear internal policies about what can and cannot be shared with generative AI tools.
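For teams that want to automate part of this habit, here is a minimal Python sketch of a pre-send check. It assumes a hypothetical policy in which only public and internal data may reach an external AI tool, and it uses two illustrative regular expressions (email addresses and IBAN-style account numbers) as stand-ins for real anonymization, which needs dedicated tooling and human judgment. The names `Classification`, `prepare_prompt`, and `redact` are invented for this example.

```python
import re
from enum import IntEnum


class Classification(IntEnum):
    """Hypothetical data classes, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3


# Assumed policy: nothing above INTERNAL may be sent to an external AI tool.
MAX_ALLOWED_EXTERNAL = Classification.INTERNAL

# Illustrative patterns only; they will miss many kinds of identifiers.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
IBAN_LIKE = re.compile(r"\b[A-Z]{2}\d{2}(?:\s?[A-Z0-9]{4}){2,7}(?:\s?[A-Z0-9]{1,4})?\b")


def redact(text: str) -> str:
    """Replace obvious identifiers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return IBAN_LIKE.sub("[ACCOUNT]", text)


def prepare_prompt(text: str, label: Classification) -> str:
    """Refuse over-classified content and redact identifiers from the rest."""
    if label > MAX_ALLOWED_EXTERNAL:
        raise PermissionError(
            f"{label.name} data must not be pasted into an external AI tool; "
            "summarize or anonymize it through an approved process first."
        )
    return redact(text)


if __name__ == "__main__":
    print(prepare_prompt("Ask anna@example.com about DE89 3704 0044 0532 0130 00",
                         Classification.INTERNAL))
    # Ask [EMAIL] about [ACCOUNT]
```

In practice the classification label would come from your existing labeling system rather than from the person writing the prompt; the point of the sketch is that the check runs before anything leaves the building.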

For executives, here’s the key insight: data safety is your license to operate. When you do it well, nobody notices. When you mess it up, it becomes a headline.

East - Output Judgment: What can I trust?

Just because something sounds fluent doesn’t mean it’s factual. The East direction reminds us that AI can be incredibly convincing even when it’s completely wrong.

Consider the case of two New York lawyers who submitted a legal brief written with ChatGPT. It looked polished and persuasive, exactly what you’d expect from experienced attorneys. But when the judge looked closer, several of its citations turned out to be fabricated. The court called them “plausible fictions.”

The critical skill here is what might be called critical prompting: treating every AI output as a first draft, never a final answer.

Make these habits part of your workflow:

Cross-check any critical facts against trusted databases or your own internal sources;

Ask the AI to explain its reasoning (“Walk me through how you reached this conclusion”);

Require human review for anything going to external audiences or into regulated contexts;

When people on your team spot AI hallucinations, encourage them to share and document those moments; they’re incredibly valuable teaching opportunities.

For executives: build verification steps directly into your workflows so they happen automatically. Good judgment shouldn’t depend on people being vigilant every single time; it should be baked into the process itself.
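One way to bake verification into the process is a release gate that every draft must pass before it leaves the team. The Python sketch below is illustrative only: the `Draft` structure, the audience labels, and the idea of checking citations against a set of already-verified sources are assumptions, and exact string matching is a deliberately simple stand-in for a real lookup in a legal or internal database.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Draft:
    """An AI-generated draft awaiting release (hypothetical structure)."""
    text: str
    audience: str  # assumed labels: "internal", "external", or "regulated"
    citations: List[str] = field(default_factory=list)
    human_reviewer: Optional[str] = None


def release_blockers(draft: Draft, verified_sources: Set[str]) -> List[str]:
    """Return the reasons a draft cannot be released yet; empty means clear."""
    blockers = []
    # Each citation must match a source someone has already verified.
    unverified = [c for c in draft.citations if c not in verified_sources]
    if unverified:
        blockers.append("unverified citations: " + ", ".join(unverified))
    # External and regulated outputs always need a named human reviewer.
    if draft.audience in {"external", "regulated"} and not draft.human_reviewer:
        blockers.append("human review required before release")
    return blockers


if __name__ == "__main__":
    brief = Draft("...", "external", citations=["Case A v. B", "Case X v. Y"])
    print(release_blockers(brief, verified_sources={"Case A v. B"}))
    # ['unverified citations: Case X v. Y', 'human review required before release']
```

Because the gate returns reasons rather than a bare yes/no, it doubles as a record of why a draft was held back, which feeds the habit of documenting hallucinations.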

West - Bias Awareness: Who is left out?

Here’s an uncomfortable truth: AI doesn’t eliminate bias; it scales it. A French insurance company discovered their chatbot was systematically delaying claims from customers with foreign-sounding names. The discrimination wasn’t intentionally coded by anyone; it was inherited from biased historical data that reflected past human decisions.

Bias awareness isn’t about achieving some perfect, bias-free state (that’s impossible). It’s about actively detecting patterns of exclusion. It means shifting your question from “Is this accurate?” to “Accurate for whom?”

Try these practical approaches:

Use what might be called the Flip Test: change a name, gender, or region in an AI-generated result and see if the outcome shifts. If it does, dig deeper to understand why;

Run bias-spotting exercises where employees practice identifying stereotypes or skewed language in AI outputs;

Track bias as a KPI: measure how often outputs get challenged for fairness;

For high-stakes AI systems like recruitment, lending, or healthcare, commission formal bias audits.
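The Flip Test is easy to automate for any system you can call programmatically. This Python sketch assumes a hypothetical `decide` function that takes a record and returns an outcome; it re-runs the decision with one attribute swapped and reports which substitutions change the result. The toy claim router is deliberately biased so the test has something to find.

```python
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]


def flip_test(decide: Callable[[Record], Any], record: Record,
              attribute: str, alternatives: List[Any]) -> Dict[Any, Any]:
    """Re-run a decision with one attribute swapped at a time.

    Returns {alternative_value: outcome} for every swap whose outcome differs
    from the original; an empty dict means no tried swap changed the result.
    """
    baseline = decide(record)
    changed = {}
    for value in alternatives:
        variant = {**record, attribute: value}  # copy; the original is untouched
        outcome = decide(variant)
        if outcome != baseline:
            changed[value] = outcome
    return changed


def toy_claim_router(claim: Record) -> str:
    """Deliberately flawed rule for illustration: it routes claims by region."""
    return "fast-track" if claim["region"] == "north" else "manual review"


if __name__ == "__main__":
    claim = {"region": "north", "amount": 1200}
    print(flip_test(toy_claim_router, claim, "region", ["south", "east", "north"]))
    # {'south': 'manual review', 'east': 'manual review'}
```

A clean result only shows that the swaps you tried didn’t change the outcome; it doesn’t rule out bias carried by proxy variables such as postcode, which is why formal audits remain necessary for high-stakes systems.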

For executives, remember this: bias isn’t a technical bug you can patch. It’s a leadership blind spot. Make it visible through dashboards, regular reviews, and public accountability.

South - Environmental & Social Impact: What is the impact of use?

AI might live in the cloud, but its footprint is very much on the ground. Training a large language model can consume as much electricity as powering hundreds of homes for a year, and cooling those massive data centers requires enormous quantities of water. Every deployment you make carries an environmental and social footprint, and leaders need to ask: who’s really paying the bill for our AI strategy?

This isn’t abstract anymore. In Europe, the Corporate Sustainability Reporting Directive (CSRD) now requires companies to disclose their indirect digital emissions (Scope 3 in sustainability reporting). In the Gulf region, where water is scarce, data centers are literally competing with agriculture and municipalities for cooling water. The impact is operational and immediate.

Here’s how to practice the South direction:

Choose cloud providers that run on renewable energy;

Track and disclose your AI-related carbon and water usage in your ESG reports;

When you automate processes, pair that with reskilling initiatives to help offset potential job displacement;

Evaluate each major project through a triple-impact lens: financial return, social benefit, and environmental footprint.
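Tracking carbon and water starts with simple arithmetic. The sketch below assumes your cloud provider reports the IT energy used by your AI workloads along with its PUE (power usage effectiveness: total facility energy divided by IT energy) and WUE (water usage effectiveness: site water use in liters per kWh of IT energy), and that you supply a grid carbon intensity for the region. The example figures are placeholders, not benchmarks, and the estimate leaves out embodied hardware emissions and water used in electricity generation.

```python
from dataclasses import dataclass


@dataclass
class Footprint:
    facility_kwh: float  # IT energy plus data-center overhead (via PUE)
    co2e_kg: float       # location-based estimate from grid carbon intensity
    water_liters: float  # on-site cooling water estimated via WUE


def estimate_footprint(it_kwh: float, pue: float,
                       grid_kg_co2e_per_kwh: float,
                       wue_liters_per_kwh: float) -> Footprint:
    """Rough footprint of an AI workload from provider-reported figures.

    PUE = total facility energy / IT equipment energy (always >= 1.0)
    WUE = site water use in liters / IT equipment energy in kWh
    """
    if it_kwh < 0 or pue < 1.0:
        raise ValueError("IT energy must be non-negative and PUE at least 1.0")
    facility_kwh = it_kwh * pue
    return Footprint(
        facility_kwh=facility_kwh,
        co2e_kg=facility_kwh * grid_kg_co2e_per_kwh,
        water_liters=it_kwh * wue_liters_per_kwh,
    )


if __name__ == "__main__":
    # Placeholder inputs, not benchmarks: 10 MWh of IT energy, PUE 1.2,
    # 0.3 kg CO2e per kWh, WUE 1.8 L/kWh.
    fp = estimate_footprint(10_000, 1.2, 0.3, 1.8)
    print(f"{fp.co2e_kg:,.0f} kg CO2e, {fp.water_liters:,.0f} L water")
    # 3,600 kg CO2e, 18,000 L water
```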

For executives: integrate AI into your ESG strategy rather than treating it as something separate. What’s invisible in your IT budgets is increasingly visible in investor reports and stakeholder expectations.

Bringing the Compass to Life

The AI Compass works both as a governance instrument and as a cultural signal. It shows everyone that responsible AI isn’t an afterthought but genuinely how your organization makes decisions. It also gives employees permission to pause and question rather than just executing orders blindly.

You can embed the Compass into your daily operations in several ways. Include it in onboarding so every new employee learns the four directions and what they mean in practice. Add a “Compass Check” to your dashboards as a gate before major deployments. Build it into your templates: prompt templates, for example, could include ethical checkpoints such as “Have you verified data safety?” and “Have you checked for bias?” Feature one Compass direction each month in your internal communications or town halls to keep it top of mind.
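Here is a minimal Python sketch of two of these ideas: a pre-deployment Compass Check that lists any direction not yet confirmed, and a prompt template that prepends the four checkpoints. The question wording, the checkbox format, and the function names are hypothetical; adapt them to your own templates and tooling.

```python
from typing import Dict, List

# The four Compass directions and the question each one asks.
COMPASS_QUESTIONS: Dict[str, str] = {
    "north": "Data safety: have you checked what you are putting in?",
    "east": "Output judgment: have you verified what comes out?",
    "west": "Bias awareness: have you checked who might be left out?",
    "south": "Impact: have you weighed the environmental and social impact?",
}


def compass_check(confirmed: Dict[str, bool]) -> List[str]:
    """Return the Compass questions not yet confirmed before deployment."""
    return [question for direction, question in COMPASS_QUESTIONS.items()
            if not confirmed.get(direction, False)]


def prompt_with_checkpoints(task: str) -> str:
    """Prepend the four checkpoints to a prompt as an unchecked checklist."""
    checklist = "\n".join(f"- [ ] {q}" for q in COMPASS_QUESTIONS.values())
    return f"Before sending, confirm:\n{checklist}\n\nTask: {task}"


if __name__ == "__main__":
    open_items = compass_check({"north": True, "east": True})
    print(f"{len(open_items)} Compass questions still open")  # 2 Compass questions still open
    print(prompt_with_checkpoints("Summarize the Q3 supplier report"))
```

Treating an unanswered direction as "not confirmed" is a deliberate choice: the check fails closed, so a forgotten question blocks the deployment instead of silently passing.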

When ethics becomes muscle memory across your organization, responsibility scales with far less effort. And when it scales, it becomes a genuine competitive advantage rather than just a cost center.

How to Convert Ethics into Business Value

Ethics pays off when you operationalize it, that is, when you move it from principle to practice. A Reuters report on an EY global survey from October 2025 drives this home: “Most companies suffer some risk-related financial loss deploying AI.” The report found that 57% of enterprises experienced direct financial impact from compliance fines, reputational damage, or failed AI deployments, which it attributed to inadequate responsible AI frameworks.

But here’s the encouraging flip side. The same study found that companies with clear accountability models, bias testing protocols, and regular audit processes were twice as likely to report financial gains from their AI innovation. What made the difference? They made responsibility a design parameter from the beginning, not something to fix after things went wrong.

Here’s what that looks like in practice:

Embed governance early: involve legal, data ethics, and operational leaders in model design, not just at deployment;

Train for ethical literacy so every employee using AI tools can recognize bias, validate outputs, and know when to escalate issues;

Measure trust as a KPI alongside your usual metrics: track stakeholder confidence, explainability scores, and how quickly you recover from incidents, not just accuracy and ROI.
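Trust KPIs can start small. This sketch computes two of the measures mentioned in this article: how often reviewed AI outputs get challenged, and how long it takes on average to recover from an AI incident. The function names and the idea of logging each incident as a (detected, resolved) timestamp pair are assumptions for illustration; stakeholder confidence and explainability scores would come from surveys and model tooling rather than a few lines of code.

```python
from datetime import datetime, timedelta
from statistics import mean
from typing import List, Tuple


def challenge_rate(reviewed: int, challenged: int) -> float:
    """Share of reviewed AI outputs that were challenged (e.g. for fairness)."""
    if reviewed <= 0:
        raise ValueError("need at least one reviewed output")
    return challenged / reviewed


def mean_time_to_recover(incidents: List[Tuple[datetime, datetime]]) -> timedelta:
    """Average time from detecting an AI incident to resolving it."""
    if not incidents:
        raise ValueError("no incidents recorded")
    seconds = [(resolved - detected).total_seconds() for detected, resolved in incidents]
    return timedelta(seconds=mean(seconds))


if __name__ == "__main__":
    start = datetime(2025, 1, 6, 9, 0)  # hypothetical incident log
    log = [(start, start + timedelta(hours=2)), (start, start + timedelta(hours=4))]
    print(challenge_rate(reviewed=200, challenged=10))  # 0.05
    print(mean_time_to_recover(log))                     # 3:00:00
```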

These aren’t soft measures. They’re emerging as the next layer of competitive differentiation in the AI space.

From Compliance to Confidence

The future of AI leadership won’t be defined by who automates the fastest. It’ll be defined by who earns the most durable trust over time. The organizations that thrive will treat responsibility as infrastructure: not as a separate program, but as a capability woven throughout their data practices, culture, and design processes.

This is the shift we’re already seeing among the most advanced companies, those balancing speed with intentionality across their AI transformation journeys. They understand something fundamental: innovation without ethics doesn’t scale sustainably. You might get quick wins, but they don’t last.

Responsible AI isn’t a brake on progress; it’s the steering wheel. It doesn’t slow innovation down; it directs it toward the kind of impact that actually lasts.